What's next for the Routable API?

Philip Bjorge | May 4th, 2021

We released the first version of our API back in November 2018. Since then, we’ve helped dozens of engineering and finance teams streamline their vendor onboarding and business payments. In that time, we’ve evolved the API over a handful of revisions and learned a lot from our customers about what they need in our API product. We’re ready to take the next big step forward. We’re excited to announce that we will be launching a new version of our API in the coming months and we’re making big bets on OpenAPI 3 and Readme as the foundation for this platform.

Why?

Like the beginning of many API journeys, ours started by surfacing some of the key APIs that powered our core web application to external developers. It’s important to remember that this was nearly 2 years before our first serious round of funding and we had to be choosy about where we spent our time and effort.

Surfacing our internal APIs and modeling was a great way to get this product out to market quickly, test for product-market fit, and (most importantly) deliver value to our customers. But our data model is not an API and this approach isn’t scaling with us as we, or our developer partners, grow.

The Routable data model

For new hires at Routable, learning our data model is a requirement. For new API customers, learning our data model is an imposition.

Our data model is heavily influenced by the internal details of our application and the evolution of our product. It’s been built from the ground up to support all the features and functionality you’ll find in our dashboard application: CSV imports, matching vendors and customers with your accounting software, and managing your team and their roles. In a way, you can think of the Routable dashboard as an abstraction layer on top of our data model. Our dashboard hides our implementation details so finance teams can focus on their jobs and speed up their business payments.

We owed our API customers a similar abstraction that enables them to focus on advancing their businesses and to spend less time learning about Routable’s implementation details.

Bridges are critical pieces of infrastructure that people build their lives and homes around. Similarly, APIs are critical pieces of infrastructure that businesses build themselves upon.

When businesses build on top of an API, there is an expectation that the API will be stable, reliable, and grow with them. At best, an API that isn’t meeting those expectations is no longer a value-add for the business. At worst, it’s a net negative. A key component of a stable, reliable API is managing change, and that is difficult to do when you are exposing your internal data model. To deliver new features to our customers like international payments and contact management, we make big, bold changes to our internal data model. These changes can be a problem for API users who are looking for a stable platform to integrate with.

We’ve done our best to insulate our API customers from these changes while supporting new product development – but ultimately, delivery velocity is slowed down for both dashboard and API users.

Missing, incomplete or inaccurate documentation

One piece of feedback we’ve heard loud and clear from our customers is that we need to improve our documentation.

As part of our commitment to doing better, we launched our developer portal on Readme at the end of March. Readme is a powerful partner which allows us to consolidate all of our guides, tutorials, and best practices in one place.

“Reference guides have one job only: to describe. They are code-determined, because ultimately that’s what they describe: key classes, functions, APIs, and so they should list things like functions, fields, attributes and methods, and set out how to use them.”

We knew we needed to take a big step forward with our reference documentation and in order to deliver the best product possible we needed a way to generate our reference documentation from an OpenAPI specification.

We use JSON:API because our dashboard benefits from the denormalized data and our team benefits from its anti-bikeshedding properties. It helps us move fast and it’s a great specification for building APIs.

Unfortunately, JSON:API is notoriously difficult to describe with OpenAPI (at least in a way that’s understandable for end-users). We’ve also heard feedback that our implementation of it is not particularly amenable to integrating with existing JSON:API clients.

Taking both of these factors into account, we decided to retire JSON:API on our public-facing API. This gave us a clear path forward to leveraging OpenAPI and riding the modern API development wave.

How?

Now that we’ve covered why we’re making this change, let’s dive into how. The how matters here: unless we change the way we do things, we’re not likely to deliver on our goals. Like most ambitious projects in software development, it takes a dash of process and a little bit of technology to land smoothly.

Design-driven development

As developers, we were very interested in code-first workflows, where you build the API first and then generate an OpenAPI specification from the code after implementation. After a number of prototypes with different technologies and stacks, we realized code-first just doesn’t work very well for your needs as API consumers or our needs as API experience engineers.

With a code-first approach, we found ourselves creating awkward APIs with incomplete auto-generated OpenAPI specifications. To bring these documents up to par, we had to jump through various hoops, either hooking into framework internals or post-processing the generated YAML. Yuck.

Once again we were exposing internal implementation details to our end users. If we renamed a class, moved it to another module, or changed the class hierarchy, a different model definition was generated in our OpenAPI specification. We knew this was going to cause problems even for our own internal use as we moved towards using these specification documents to jumpstart SDK development.
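To make that coupling concrete, here is a minimal sketch of a code-first schema generator. It is a toy built on standard-library dataclasses, not the generator of any real framework, and the class names are hypothetical. Because the component name is derived from the class name, an internal rename also renames the schema that SDKs would be built from.

```python
from dataclasses import dataclass, fields

# Toy mapping from Python types to OpenAPI primitive types.
_OPENAPI_TYPES = {str: "string", int: "integer", bool: "boolean"}


def schema_for(model):
    """Derive an OpenAPI-style component schema from a dataclass."""
    properties = {f.name: {"type": _OPENAPI_TYPES[f.type]} for f in fields(model)}
    # The component name comes straight from the class name.
    return {model.__name__: {"type": "object", "properties": properties}}


@dataclass
class Company:  # hypothetical model
    name: str
    is_vendor: bool


@dataclass
class PartnerCompany:  # the same model after an internal rename
    name: str
    is_vendor: bool


before = schema_for(Company)
after = schema_for(PartnerCompany)
print(sorted(before), sorted(after))  # ['Company'] ['PartnerCompany']
```

The fields are identical, yet the published definition changed name, which is exactly the kind of churn a hand-written specification avoids.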

Additionally, with a code-first workflow in a dynamic language like Python or JavaScript, you have the flexibility to do all sorts of wild and crazy things with your API request and response models. If you do go wild and crazy, you tend to deliver APIs with inconsistencies, awkward usage patterns, and sharp edges that make it difficult to build clients against them.

Instead, we embraced design-driven API development, a process where we describe the APIs in an OpenAPI specification before we start writing code. The benefits have been tremendous.

Working within the constraints of OpenAPI tends to drive an API towards simple rather than complex.

We’re able to frontload much of our reference documentation. We can preview the documentation as our end users will see it and see if it passes the “smell test”: “Does this API make sense in the context of our others? Is this easy to understand?”

And the specification ends up being useful for much more than just reference documentation.

We can start up mock servers that run from the spec and actually play with the API to test its usability. We’re excited to use this functionality in the future to streamline SDK testing in our CI/CD pipelines. In addition, we can benefit from code generation on both the client and server.

It’s even improved our security posture: best practices are baked directly into our process. For example, we’re reviewing and carefully defining schemas for all our responses, explicitly specifying all the parameters and payloads we’re expecting, and validating all this at runtime. All of these practices are considered effective prevention methods for multiple entries of the OWASP API Security Top 10 list including excessive data exposure, mass assignment, and injection. When your OpenAPI spec becomes the single source of truth for your API, it brings much needed clarity and stability to your API development processes.

The stack

So, we’d be remiss to skip talking about the technology that enables us here. It’s pretty cool and can serve as inspiration for any other teams looking to move towards a design-driven approach to API development.

As far as our stack is concerned, we went with FastAPI and Pydantic to power the API.

Borrowed from their docs, “FastAPI is a modern, fast (high-performance), web framework for building APIs with Python 3.6+ based on standard Python type hints.” We love it because it’s async, has built-in support for dependency injection, and integrates seamlessly with Pydantic, a data validation library built on top of Python’s type annotations. Not only are we going all in on OpenAPI, we’re going all in on types!

On the tooling side, we’re deep into the Node ecosystem and use several tools to help us write our descriptions, including Spectral for linting, Prism for mocking, and openapi-cli for previewing as we write.

Let’s see how this all works together in concert to optimize our internal developer experience so we can produce the best possible external developer experience.

Docs and mocks

When writing our OpenAPI documentation, we run openapi preview-docs to start up a Redoc watch server. At the end of the day, the bread and butter of API docs are your request and response models, and in our experience Redoc has the best support for OpenAPI. With practice, you can get away from needing the preview server and live purely in the YAML file, but we still find it useful.

Manual verification is definitely not sufficient when you’re dealing with a multi-thousand-line YAML file. We run our specification through the Spectral linter, using a number of community-designed rulesets as well as our own custom ones, to help drive consistency in our API.

    foo@bar:~$ npm run descriptions:lint
    descriptions/v1/examples/companies/create-company-business.yaml
      1:7  error  oas3-valid-oas-content-example  missing required property acting_team_member

No more inaccurate examples!

We love how it lints our examples and ensures they are up-to-date and accurate.

    foo@bar:~$ npm run descriptions:lint
    descriptions/v1/examples/companies/create-company-business.yaml
      1:7  error  oas3-valid-oas-content-example  missing required property acting_team_member

No more inconsistent property names!
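For intuition about what an error like "missing required property" means, here is a rough sketch of the core idea in plain Python. It is not how Spectral is implemented (Spectral validates examples against the full schema), and the schema and example below are hypothetical stand-ins that only reuse property names seen elsewhere in this post.

```python
def missing_required(schema, example):
    """List the required properties that an example payload does not provide."""
    return [name for name in schema.get("required", []) if name not in example]


# Hypothetical request schema, already parsed from the specification.
create_company_schema = {
    "type": "object",
    "required": ["acting_team_member", "name", "type"],
    "properties": {
        "acting_team_member": {"type": "string"},
        "name": {"type": "string"},
        "type": {"type": "string"},
    },
}

# Hypothetical example payload that has drifted out of date.
stale_example = {"name": "Vance Refrigeration", "type": "business"}

for name in missing_required(create_company_schema, stale_example):
    print(f"error: missing required property {name}")
```

Running a check of this kind on every example in the description is what keeps documentation samples from silently going stale.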

It even helps us catch subtle violations, like date attributes that don’t follow our naming convention.

    # Rationale: Callers can know whether a field is a date easily
    openapi-date-properties-on:
      description: OpenAPI date properties should be postfixed with _on
      type: style
      message: "'{{property}}' is a date and must end in _on"
      given: "$.components.schemas.*.properties.[?(@.format=='date')]*~"
      then:
        field: "@key"
        function: pattern
        functionOptions:
          match: "_on$"

Feel free to use this rule in your APIs 🙂
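If you don’t use Spectral, the intent of the rule can be approximated in a few lines of Python. This is only a sketch of the same check over a specification that has already been parsed into a dict; the `Invoice` schema and its properties are made up for illustration.

```python
import re

DATE_SUFFIX = re.compile(r"_on$")


def misnamed_date_properties(spec):
    """Return (schema, property) pairs for date properties not ending in _on."""
    problems = []
    schemas = spec.get("components", {}).get("schemas", {})
    for schema_name, schema in schemas.items():
        for prop_name, prop in schema.get("properties", {}).items():
            if prop.get("format") == "date" and not DATE_SUFFIX.search(prop_name):
                problems.append((schema_name, prop_name))
    return problems


# Hypothetical spec fragment.
spec = {
    "components": {
        "schemas": {
            "Invoice": {
                "properties": {
                    "due_on": {"type": "string", "format": "date"},
                    "issued": {"type": "string", "format": "date"},
                    "memo": {"type": "string"},
                }
            }
        }
    }
}

print(misnamed_date_properties(spec))  # [('Invoice', 'issued')]
```

Only top-level properties of each component schema are inspected here; nested objects would need a recursive walk.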

Finally, after our specification is drafted, we can start up a mock server with Prism and validate that the API works in practice. As part of our first specification draft, we actually built out a mini SDK to validate the interfaces!

    routable = Routable("http://127.0.0.1:4010/v1", header={"Authorization": "Bearer 12345"})

    # Create a Vendor
    vance_refrigeration = routable.companies.create(
        contacts=[
            {
                "email": "bob@vancerefrigeration.com",
                "first_name": "Bob",
                "last_name": "Vance",
                "phone_number": "+14155552671",
            }
        ],
        is_vendor=True,
        name="Vance Refrigeration",
        type="business",
    )

    # Add a bank account
    payable_account = routable.companies(vance_refrigeration.hid).payable_accounts.create(
        bank={"account_number": "9876543210", "routing_number": "015539113", "type": "checking"},
        headers={"Prefer": "example=Bank"},
    )

    # Send an Invite on Kevin's behalf
    kevin = routable.settings.team_members(email="kevin@dundermifflin.com").results
    routable.companies(vance_refrigeration.hid).invite.create(
        acting_team_member=kevin.hid, message="Please accept this invitation ASAP"
    )

An early SDK prototype

Simplifying code and tests

Where the magic really begins is when we use our specification to drive code generation and testing examples.

On the code generation side, we use datamodel-code-generator to generate incoming request and outgoing response models for our FastAPI project. This ensures that the data coming in and leaving our API is always in compliance with our specification and in turn our documentation. This is great from both a developer experience perspective and security perspective.
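As a rough illustration of why typed request and response models help, the sketch below uses standard-library dataclasses as a stand-in for the generated Pydantic models. The model and field names are hypothetical, and real Pydantic models also validate types, which dataclasses do not. The idea is that undeclared input is rejected and undeclared output is never serialized.

```python
from dataclasses import asdict, dataclass, fields


@dataclass
class CreateCompanyRequest:
    """Stand-in for a generated request model (field names are hypothetical)."""
    name: str
    type: str
    is_vendor: bool = False


@dataclass
class CompanyResponse:
    """Stand-in for a generated response model."""
    id: str
    name: str
    type: str


def parse_request(payload):
    """Build the request model, rejecting properties the spec does not declare."""
    allowed = {f.name for f in fields(CreateCompanyRequest)}
    unexpected = sorted(set(payload) - allowed)
    if unexpected:
        raise ValueError(f"unexpected properties: {unexpected}")
    return CreateCompanyRequest(**payload)


def render_response(record):
    """Serialize only the fields declared on the response model."""
    declared = {f.name for f in fields(CompanyResponse)}
    return asdict(CompanyResponse(**{k: v for k, v in record.items() if k in declared}))


request = parse_request({"name": "Vance Refrigeration", "type": "business"})
# An internal record may carry fields that must never reach API consumers.
record = {"id": "co_123", "name": request.name, "type": request.type, "internal_risk_score": 7}
print(render_response(record))
```

Rejecting unknown input addresses mass assignment, and serializing through a declared model addresses excessive data exposure.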

On the testing side, we use the examples in our OpenAPI specification to drive our end-to-end tests, ensuring that our examples are always accurate and functional for developers integrating with our API.

    def test_personal_vendor_company_flow(self, acting_team_member_id, example_loader, faker, client):
        name_uuid = str(uuid4())
        create_request = example_loader("companies/create-company-personal")
        create_request = acting_team_member_id
        create_request = str(uuid4()) + faker.email()
        create_request = str(uuid4())
        create_request["name"] += f" [{name_uuid}]"

        # Can create a new company
        create_response = client.post("/v1/companies", json=create_request)
        assert create_response.status_code == 201
        create_json = create_response.json()

        # Retrieve it
        retrieve_response = client.get(f'/v1/companies/{create_json}')
        assert retrieve_response.status_code == 200
        retrieve_json = retrieve_response.json()
        assert retrieve_json == create_json

Examples that drive our tests - a virtuous cycle
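The post doesn’t show how the `example_loader` fixture is built, so the following is only a guess at its shape. It assumes examples are stored as JSON files (the originals are YAML, but the standard library has no YAML parser) and returns a fresh copy on every call so that a test can safely tweak the payload.

```python
import copy
import json
import tempfile
from pathlib import Path


def make_example_loader(examples_dir):
    """Return a loader that reads named examples from examples_dir."""
    cache = {}

    def load(name):
        if name not in cache:
            path = Path(examples_dir) / f"{name}.json"
            cache[name] = json.loads(path.read_text())
        # Hand out a copy so one test's edits never leak into another.
        return copy.deepcopy(cache[name])

    return load


# Usage with a throwaway directory holding one hypothetical example.
with tempfile.TemporaryDirectory() as tmp:
    example_path = Path(tmp) / "companies" / "create-company-personal.json"
    example_path.parent.mkdir(parents=True)
    example_path.write_text(json.dumps({"name": "Bob Vance", "type": "personal"}))

    example_loader = make_example_loader(tmp)
    first = example_loader("companies/create-company-personal")
    first["name"] += " [test-run]"
    second = example_loader("companies/create-company-personal")

print(first["name"], "|", second["name"])  # Bob Vance [test-run] | Bob Vance
```

In a pytest suite, a function like `make_example_loader` could be wrapped in a fixture pointing at the descriptions directory.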

When?

This sounds awesome, right? “When can I get access?!” you say? We’re still finalizing our release timelines here but we’re aiming to have beta access available for new and existing customers in the coming months. We’ll keep you posted.

If you’re an engineer reading this and think this sounds awesome, drop us a line at developers@routable.com and check out our careers page to join our awesome team.

Recommended Reading

Developer

From 5 engineers to 50: What a fast-growth team has taught me

A Routable manager shares lessons learned as part of an engineering team that has exploded in size in a short time.

Developer

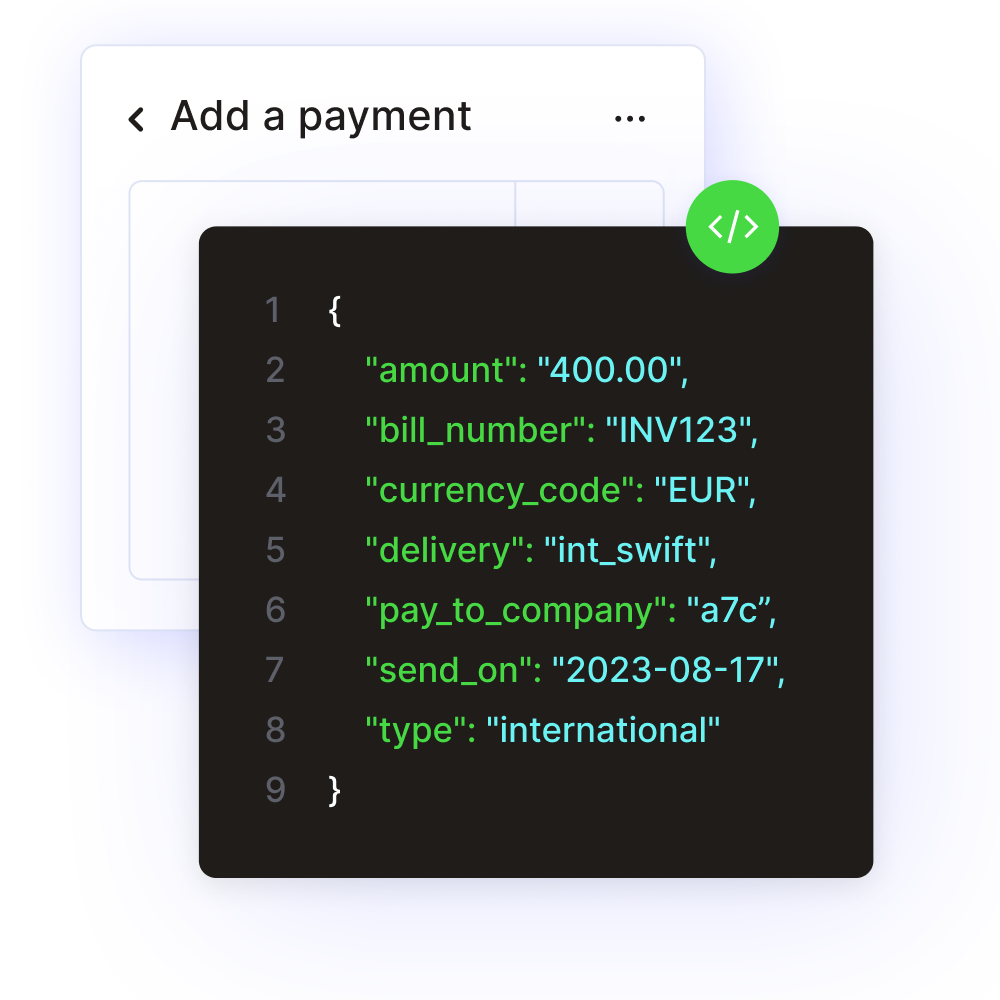

How business payments are like pull requests

Business payments are a lot like pull requests—the mechanism software engineers use to alert their team about changes to code and get it reviewed before it’s deployed.